FabreX Universal Dynamic Fabric Overview

FabreX is the only fabric that enables complete disaggregation and composition of all the resources in your rack. In addition to composing resources to servers, only FabreX can compose the servers themselves over PCIe (and CXL in the future), avoiding the cost, complexity and latency of switching to Ethernet or InfiniBand within the rack.

Rack-Scale Computing Made Simple

FabreX, the universal dynamic fabric, delivers on the promise of rack-scale computing by breaking the server chassis barrier. You can share and compose all the components in a rack and beyond on the fly, based on the demands of individual workloads. For ease of deployment, GigaPod Engineered Solutions include NVIDIA Base Command Manager and everything else you need to scale painlessly from the basic GigaPod™ to GigaClusters™ and beyond, delivering the agility of the cloud at a fraction of the cost.

Composition Software

One of the key advantages of a FabreX-powered composable infrastructure is freedom of choice in how you disaggregate and recompose your rack resources. You choose the tool best suited to your environment: our robust CLI (Command Line Interface) and Redfish interfaces, which give you the utmost control and drop into your existing DevOps workflow, or a unified single-pane-of-glass GUI that manages your entire infrastructure with advanced, automated resource scheduling through NVIDIA Base Command Manager or SuperCloud Composer.

FabreX Software

Our host software enables server-to-server communication over FabreX for protocols such as NVMe-oF, MPI, Libfabric, and TCP/IP. It is open source, supports all popular Linux distributions, and can be downloaded from our support portal.

Our switch software engine drives the performance and dynamic composability of GigaIO’s composable disaggregated infrastructure (CDI) for enterprise data centers and high-performance computing environments.

Fabric Switch

The GigaIO Fabric Switch is the fundamental building block of GigaIO’s AI fabric, enabling true Software Defined Infrastructure (SDI). Our latest Fabric Switch features 6.1Tb/s switch capacity with industry-leading sub-130ns latency.

Fabric management is administered through DMTF Redfish® RESTful APIs, an open standard, which provide an easy-to-use interface for configuring computing clusters on the fly.
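As a rough illustration of what a Redfish client looks like, the sketch below reads a Redfish service root and lists the top-level resource collections it advertises. The `/redfish/v1/` path and the `@odata.id` link convention come from the DMTF Redfish specification; the host name, the helper function names, and the sample payload are hypothetical, and nothing here describes the specific resources a GigaIO switch exposes.

```python
import json
import urllib.request

# Service root path defined by the DMTF Redfish specification.
REDFISH_ROOT = "/redfish/v1/"


def service_root_url(host: str) -> str:
    """Build the service root URL for a Redfish endpoint (host is hypothetical)."""
    return f"https://{host}{REDFISH_ROOT}"


def collection_links(service_root: dict) -> dict:
    """Map each top-level resource name to the @odata.id link it advertises."""
    return {
        name: value["@odata.id"]
        for name, value in service_root.items()
        if isinstance(value, dict) and "@odata.id" in value
    }


def fetch_service_root(host: str, timeout: float = 5.0) -> dict:
    """GET the service root over HTTPS and decode the JSON body."""
    with urllib.request.urlopen(service_root_url(host), timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Illustrative payload only; a real service root carries more properties.
    sample = {
        "@odata.id": "/redfish/v1/",
        "Name": "Root Service",
        "Systems": {"@odata.id": "/redfish/v1/Systems"},
        "Fabrics": {"@odata.id": "/redfish/v1/Fabrics"},
    }
    for name, link in collection_links(sample).items():
        print(name, link)
```

Against a live endpoint, `fetch_service_root("fabric-switch.example")` would replace the sample payload. Resources below the service root normally require authentication, which this sketch leaves out.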

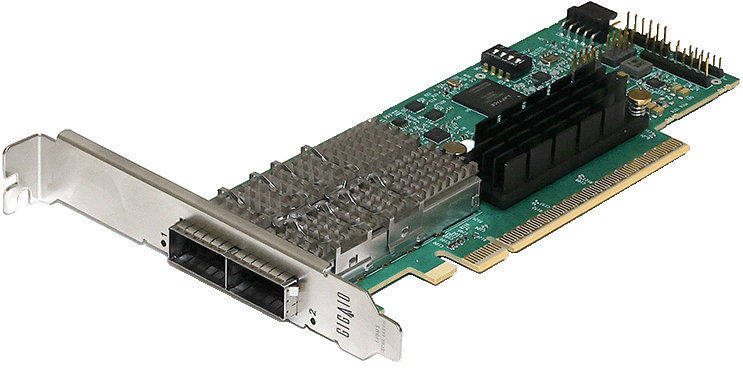

Fabric Card

The GigaIO Fabric Card is the high-performance, cabled interface to cluster subsystems across our AI fabric hyper-performance network. The adapter card supports both host and target (for PCIe I/O) modes. With the card installed, applications can access remote PCIe devices as if they were attached to the local system.

All elements on the AI fabric are interconnected using standardized, robust and easy-to-use copper and active optical cables. These cabling solutions support connection lengths from 1 m to 30 m.

Managed Accelerator Pooling Appliance

The GigaIO™ Accelerator Pooling Appliance is the industry’s highest-performing PCIe accelerator appliance. It fully supports PCIe Gen5, with up to 2.048Tb/s of total bandwidth dedicated to host server upstream connections.
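The 2.048Tb/s figure can be sanity-checked against PCIe Gen5 signaling rates. The sketch below assumes four x16 upstream links counted at the raw per-direction rate; that link count and width are assumptions chosen for illustration, not a published breakdown of the appliance. The 32 GT/s per-lane rate and 128b/130b encoding are standard PCIe 5.0 parameters.

```python
# PCIe 5.0 raw signaling rate per lane, in GT/s (one bit per transfer).
GEN5_GT_PER_LANE = 32
# PCIe 3.0 and later use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_raw_gbps(lanes: int) -> int:
    """Raw per-direction rate of one Gen5 link, in Gb/s."""
    return lanes * GEN5_GT_PER_LANE


def link_effective_gbps(lanes: int) -> float:
    """Per-direction rate of one Gen5 link after encoding overhead, in Gb/s."""
    return link_raw_gbps(lanes) * ENCODING_EFFICIENCY


def aggregate_raw_tbps(links: int, lanes: int) -> float:
    """Raw per-direction rate summed over several identical links, in Tb/s."""
    return links * link_raw_gbps(lanes) / 1000


if __name__ == "__main__":
    # Assumed configuration: four x16 upstream links (hypothetical).
    print(link_raw_gbps(16))                  # raw Gb/s for one x16 link
    print(round(link_effective_gbps(16), 1))  # after 128b/130b encoding
    print(aggregate_raw_tbps(4, 16))          # raw Tb/s for four x16 links
```

Under these assumptions the raw aggregate matches the quoted 2.048Tb/s; usable throughput per link is slightly lower once encoding and protocol overhead are taken into account.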

This flexible expansion platform lets users add any PCIe Gen5 application accelerator, including GPUs, FPGAs, IPUs, DPUs, thin NVMe servers and specialty AI chips. It also delivers a whopping 6400W of fully redundant power to feed even the most power-hungry accelerators.

Managed High Performance Storage Pooling Appliance

This expansion chassis is ideal for building a high-performance NVMe-based flash array, or JBOF (Just a Bunch of Flash), or even for hosting computational storage units. It holds up to 32 2.5” drives and includes 1+1 redundant 1000W high-efficiency 80 Plus Titanium PSUs, delivering high throughput and low latency for resource sharing, together with high availability.