FabreX: Not Your Father’s PCIe

Ever heard of PCIe, the protocol that all computer components “speak” natively, being taken outside the server chassis? Isn’t PCIe just a bus protocol, like SCSI? Well, at GigaIO we have figured out how to break the chassis barrier and take PCIe outside the box. This lets us connect servers to other resources, and servers to servers, all in native PCIe.

So what, you might say? In a word, performance. Imagine that we all speak English, just as all components of a computer system “speak” PCIe. Now imagine that to communicate with one another, we were forced to first talk to, say, a French speaker. That person would repeat our sentence to another French speaker, who would finally relay it back in English to a fourth person.

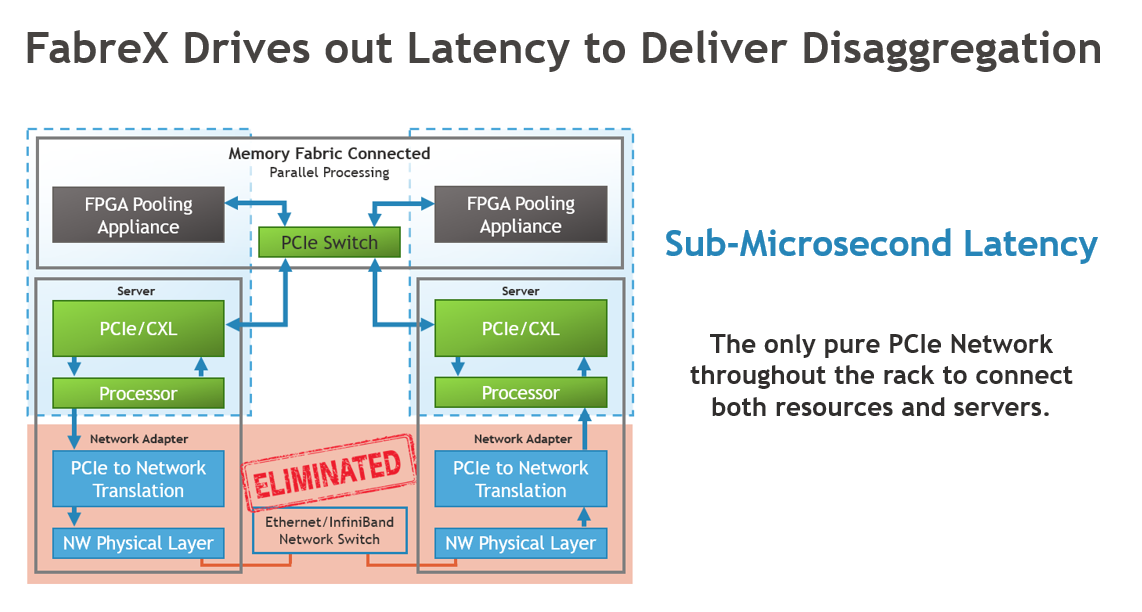

This is a crude metaphor for what happens today in a data center rack. The CPU speaks PCIe to its I/O port. The signal is then “translated” into Ethernet or InfiniBand to move from box to box, only to be translated back into PCIe when it reaches its destination (storage, accelerators, another server – PCIe is the only language they understand).

This matters because each translation point adds latency and software overhead. It also creates opportunities for security breaches, since the data is briefly stored at each hop. Data traveling on a PCIe network never stops.

GigaIO’s FabreX™ is the only routable PCIe network solution on the market today. It offers the industry’s lowest latency and highest performance, not only between a server and its resources but also between servers throughout the rack and beyond. How is that possible?

To dive into what makes our implementation so ingenious, read “FabreX: a primer”.

To find out why a PCIe network maximizes security, read “For the Security-first Data Center, make your network end-to-end PCIe”.